Executive Summary

- Who this is for: Enterprise Architects, CTOs, Solution Architects, Architecture Governance Teams

- Problem it solves: Organizations deploying AI agents have no structural record of who authorized the agent to act, what decisions it can make autonomously, and who is accountable when it is wrong

- Key outcome: A practical method to extend Architectural Decision Records into an Agentic Decision Log — creating agent accountability without building new governance infrastructure

- Time to implement: 30–60 days to introduce the Agentic Decision Log as a governance practice

- Business impact: Clear agent accountability, reduced regulatory exposure under the EU AI Act, and architecture governance that extends naturally into agentic systems

The Captain Who Signed the Logbook

Every commercial vessel has a logbook.

Every course deviation is recorded.

Every handoff between captain and autopilot is documented.

Not because the autopilot cannot be trusted.

But because trust requires a record.

When something goes wrong — a collision, a grounding, a missed port — the logbook answers the first question.

Who authorized this?

Now imagine a vessel where the captain engages the autopilot.

No entry in the logbook.

No record of the handoff.

No documentation of what the autopilot was permitted to do.

When something goes wrong, no one can answer.

Who authorized this course?

What constraints was the autopilot given?

Who owns this outcome?

That vessel is your organization.

The autopilot is your AI agent.

The missing logbook entry is the gap that the EU AI Act, your auditors, and your architecture governance will all eventually ask you to fill.

The Governance Gap Nobody Has Mapped

Most organizations have deployed AI agents somewhere.

A copilot that drafts code.

An agent that triages support tickets.

A model that approves low-risk purchase orders.

A workflow that classifies customer sentiment and routes it automatically.

Each of these agents makes decisions.

Some autonomously.

Some within boundaries.

Some without any boundaries at all.

Now ask one question.

Where is the record of who authorized each of these agents to act?

In most organizations the answer is the same.

It does not exist.

What Most Organizations Believe About Agent Governance

Most teams believe AI agent governance is a compliance problem.

Something the legal team handles.

Something that will be addressed with a policy document.

Something that lives in an AI ethics framework attached to no engineering system.

This is the wrong model.

Agent governance is an architecture problem.

Specifically, it is a decision accountability problem.

Who decided this agent could act autonomously?

What constraints was it given?

What happens when it exceeds them?

Who is accountable for its outputs?

These are architectural questions.

They require architectural answers.

And most organizations already have the structure to answer them.

They are just not using it for this purpose.

The Structure You Already Have

Architectural Decision Records exist in most mature engineering organizations.

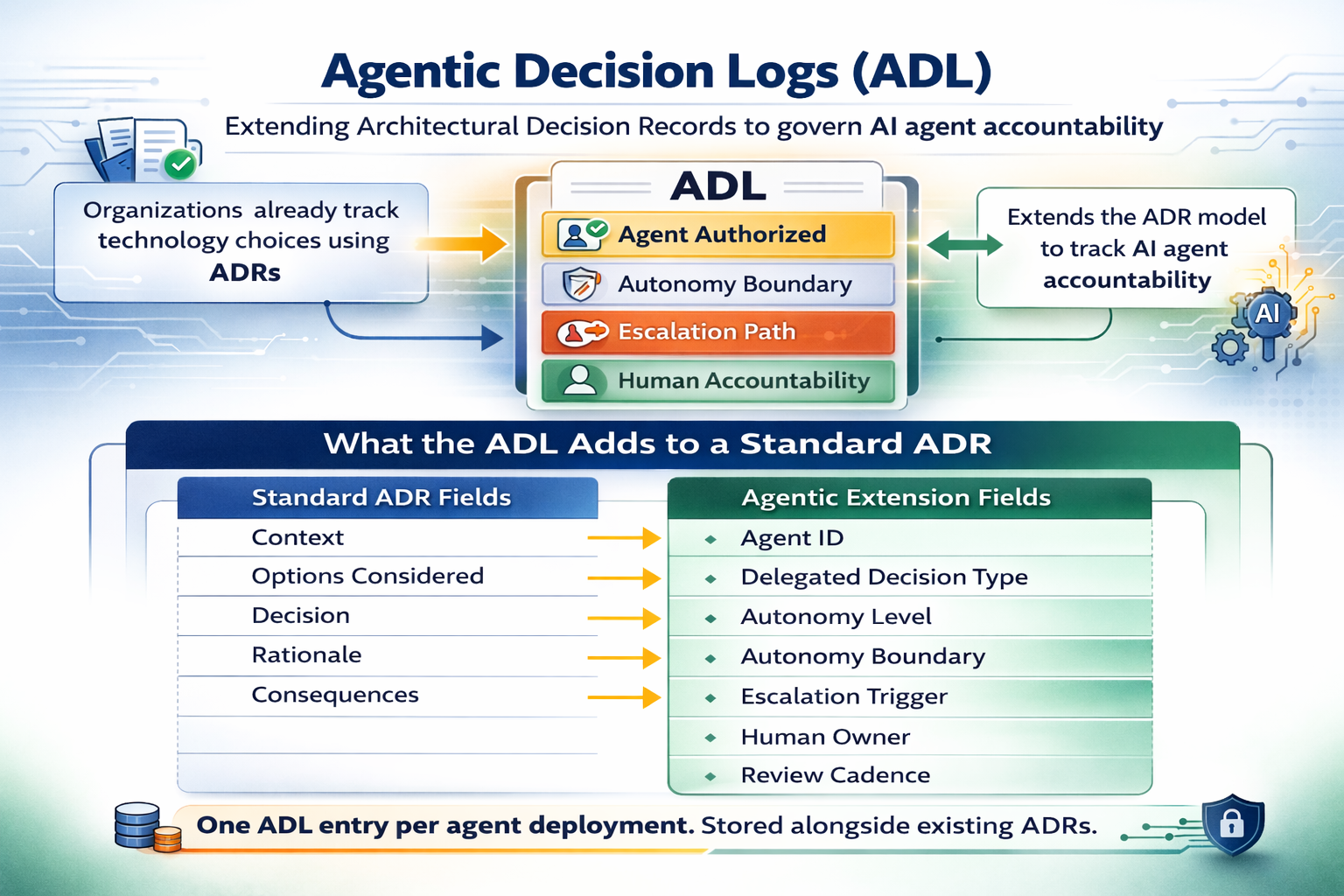

An ADR records:

- the problem being solved

- the options considered

- the decision made

- the rationale behind it

- the consequences accepted

ADRs are used to document decisions about technology choices, integration patterns, platform adoption, and system boundaries.

They are documentation artifacts.

But their mechanical structure — a bounded record of a decision, its authorization, its constraints, and its owner — is precisely the structure needed to govern AI agents.

The organization does not need to build new governance infrastructure.

It needs to extend what it already has.

The Agentic Decision Log (ADL)

The extension of the ADR model into AI agent governance is the Agentic Decision Log.

ADL is not a new framework.

It is the ADR model applied to a new category of decision: the decision to delegate authority to an AI agent.

Where an ADR records why a human team chose one architecture over another, an ADL records:

- which decisions have been delegated to an AI agent

- what autonomy boundary that agent operates within

- what the escalation path is when the agent reaches that boundary

- who the human owner is when the agent is wrong

The ADL does not govern the agent's behavior.

It governs the authorization to act.

The ADL Structure

A complete Agentic Decision Log record contains the following fields alongside the standard ADR structure.

Standard ADR Fields (Retained)

| Field | Content |
|---|---|
| Context | What business problem is this agent solving? |
| Options Considered | What alternatives were evaluated, including human-only execution? |
| Decision | Which agent capability was authorized, and at what scope? |
| Rationale | Why was autonomous operation selected over supervised operation? |
| Consequences | What trade-offs were accepted? What risks were acknowledged? |

Agentic Extension Fields (New)

| Field | Content |
|---|---|
| Agent ID | Unique identifier for this agent deployment |
| Delegated Decision Type | Specific category of decisions the agent is authorized to make |
| Autonomy Level | Fully autonomous / Supervised / Human-gated |
| Autonomy Boundary | Explicit constraints: what the agent cannot decide without escalation |
| Escalation Trigger | The conditions that require the agent to stop and involve a human |
| Human Owner | Named individual or role accountable for agent outputs |
| Authorization Date | When this delegation was formally recorded |
| Review Cadence | How often this ADL entry will be reviewed against observed behavior |

The addition is structural, not bureaucratic.

Each new agent capability gets one ADL entry.

The entry answers the questions that every governance review, audit, and incident post-mortem will eventually ask.
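
One way to make the two field tables concrete is to treat an ADL entry as a typed record. The sketch below is an illustration, not a prescribed schema: the class name `ADLEntry`, the `missing_fields` helper, and the example values for options and decision are assumptions introduced here.

```python
from dataclasses import dataclass, fields
from datetime import date
from enum import Enum
from typing import Optional


class AutonomyLevel(Enum):
    FULLY_AUTONOMOUS = "fully autonomous"
    SUPERVISED = "supervised"
    HUMAN_GATED = "human-gated"


@dataclass(frozen=True)
class ADLEntry:
    # Standard ADR fields (retained)
    context: str
    options_considered: tuple[str, ...]
    decision: str
    rationale: str
    consequences: str
    # Agentic extension fields (new)
    agent_id: str
    delegated_decision_type: str
    autonomy_level: Optional[AutonomyLevel]
    autonomy_boundary: str
    escalation_triggers: tuple[str, ...]
    human_owner: str
    authorization_date: Optional[date]
    review_cadence: str

    def missing_fields(self) -> list[str]:
        """Names of fields left empty; an empty list means the record is complete."""
        return [f.name for f in fields(self) if getattr(self, f.name) in (None, "", ())]


# A draft entry with illustrative values; several fields are deliberately left empty.
draft = ADLEntry(
    context="Automate approval of purchase orders below a defined threshold",
    options_considered=("human-only approval", "supervised agent"),
    decision="Authorize agent approval below the threshold",
    rationale="",
    consequences="",
    agent_id="procurement-agent-v2",
    delegated_decision_type="purchase order approval",
    autonomy_level=AutonomyLevel.SUPERVISED,
    autonomy_boundary="Orders above the defined threshold require human review",
    escalation_triggers=("vendor not in approved list",),
    human_owner="",
    authorization_date=None,
    review_cadence="quarterly",
)

print(draft.missing_fields())
```

A draft that still reports missing fields is not yet an authorization record. The same structure works equally well as a markdown template; the typed form simply makes the completeness check mechanical.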

The Two Captains

Return to the vessel analogy.

Two organizations deploy the same AI agent for purchase order approval.

Same model.

Same capability.

Same risk profile.

The First Organization

The agent is deployed by the engineering team.

It works.

It processes hundreds of approvals per week.

Nobody asks who authorized it.

Nobody asks what its constraints are.

Nobody asks who owns a wrong approval.

Six months later, the agent approves a purchase order it should have escalated.

The incident review asks three questions.

Who authorized this agent to approve orders of this size?

What constraints was it given?

Who is accountable?

Nobody can answer.

The investigation takes three months.

The audit finding cites a governance failure.

The EU AI Act review classifies the deployment as high-risk with no documented authorization.

The Second Organization

Before deployment, the architect creates ADL-031.

Context: automate approval of purchase orders below a defined threshold.

Agent ID: procurement-agent-v2.

Delegated Decision Type: purchase order approval.

Autonomy Level: supervised.

Autonomy Boundary: orders above the defined threshold require human review.

Escalation Trigger: vendor not in approved list, order exceeds threshold, anomaly score above threshold.

Human Owner: Head of Procurement.

Authorization Date: recorded.

Review Cadence: quarterly.

Six months later, the same incident occurs.

The incident review asks the same three questions.

ADL-031 answers all of them in under two minutes.

The organization corrects the boundary.

Updates the ADL.

Closes the incident.

The audit review finds a governance record in place.

The agent behavior was identical.

The governance outcome was not.
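
The escalation triggers recorded in ADL-031 are specific enough to express as a check. The following is a minimal sketch under stated assumptions: the vendor names and both numeric thresholds are hypothetical (the entry only says "a defined threshold"), and `PurchaseOrder`, `escalation_reasons`, and `decide` are names invented for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PurchaseOrder:
    vendor: str
    amount: float
    anomaly_score: float


# Hypothetical values; ADL-031 as described does not state them.
APPROVED_VENDORS = frozenset({"acme-supplies", "northwind"})
AMOUNT_THRESHOLD = 10_000.00
ANOMALY_THRESHOLD = 0.8


def escalation_reasons(order: PurchaseOrder) -> list[str]:
    """Return every ADL-031 escalation trigger that this order hits."""
    reasons = []
    if order.vendor not in APPROVED_VENDORS:
        reasons.append("vendor not in approved list")
    if order.amount > AMOUNT_THRESHOLD:
        reasons.append("order exceeds threshold")
    if order.anomaly_score > ANOMALY_THRESHOLD:
        reasons.append("anomaly score above threshold")
    return reasons


def decide(order: PurchaseOrder) -> str:
    """Approve only when no trigger fires; otherwise hand off to the human owner."""
    reasons = escalation_reasons(order)
    if reasons:
        return f"escalate to Head of Procurement: {'; '.join(reasons)}"
    return "approve"


print(decide(PurchaseOrder("acme-supplies", 500.00, 0.1)))
print(decide(PurchaseOrder("unknown-vendor", 20_000.00, 0.9)))
```

The point of the sketch is traceability: each branch maps to one line of the ADL entry, so correcting the boundary after an incident means changing one recorded value rather than reconstructing intent.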

What Breaks When This Is Ignored

When AI agents operate without an Agentic Decision Log:

Accountability collapses at the moment it is needed most.

Incident post-mortems cannot identify authorization chains.

The question "who decided this agent could do this?" produces silence.

Regulatory reviews find no evidence of intentional governance.

Autonomy boundaries drift without record.

An agent deployed with informal constraints gradually expands its scope.

Without a documented boundary, there is no baseline to measure drift against.

The organization discovers the boundary was wrong when the consequence becomes visible.

The EU AI Act creates exposure that is structural, not incidental.

Most high-risk AI system obligations are scheduled to apply from August 2026.

Organizations that cannot produce documented authorization records for agents operating in regulated domains are not unprepared.

They are already non-compliant.

When the Agentic Decision Log is in place:

Authorization chains exist before incidents require them.

Autonomy boundaries are explicit, reviewable, and versioned.

Governance reviews produce records, not investigations.

Implementation Guide (30–60 Days)

Introducing the Agentic Decision Log does not require new tooling.

It requires extending an existing practice to a new category of decision.

Phase 1: Inventory Existing Agent Deployments (Weeks 1–2)

Identify every AI agent currently operating in the organization.

This is not a technical exercise.

It is a governance exercise.

For each agent, ask:

- What decisions does it make without human involvement?

- What constraints, if any, were documented at deployment?

- Who is currently accountable if it produces a wrong output?

Most organizations will find that these questions cannot be cleanly answered for the majority of their deployments.

That gap is the governance finding.

Deliverable: Agent inventory mapped to decision type, autonomy level, and current accountability status

Success Metric: Complete inventory of agent deployments with accountability gaps identified
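
The three inventory questions above translate into a simple gap report. This is a sketch only: `InventoryRow`, `accountability_gaps`, `gap_report`, and the agent names other than `procurement-agent-v2` are hypothetical, and a spreadsheet would serve the same purpose.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class InventoryRow:
    agent_id: str
    decision_type: str
    autonomy_level: Optional[str]        # None if never documented
    documented_boundary: Optional[str]   # None if no constraints were recorded
    accountable_owner: Optional[str]     # None if nobody is named


def accountability_gaps(row: InventoryRow) -> list[str]:
    """List which accountability answers are missing for one agent."""
    gaps = []
    if not row.autonomy_level:
        gaps.append("autonomy level")
    if not row.documented_boundary:
        gaps.append("autonomy boundary")
    if not row.accountable_owner:
        gaps.append("human owner")
    return gaps


def gap_report(inventory: list[InventoryRow]) -> dict[str, list[str]]:
    """Map each agent with at least one gap to its list of gaps."""
    report = {}
    for row in inventory:
        gaps = accountability_gaps(row)
        if gaps:
            report[row.agent_id] = gaps
    return report


inventory = [
    InventoryRow("procurement-agent-v2", "purchase order approval",
                 "supervised", "orders above threshold need review", "Head of Procurement"),
    InventoryRow("ticket-triage-agent", "support ticket routing", None, None, None),
    InventoryRow("sentiment-router", "customer sentiment routing", "fully autonomous", None, None),
]

for agent_id, gaps in gap_report(inventory).items():
    print(f"{agent_id}: missing {', '.join(gaps)}")
```

Agents that appear in the report are the ones whose governance currently rests on assumption.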

Phase 2: Create ADL Entries for High-Risk Deployments (Weeks 3–5)

Begin with agents operating in regulated or consequential domains.

Examples include:

- agents making financial decisions above defined thresholds

- agents processing personal data

- agents routing or triaging customer interactions

- agents generating content published without human review

For each, create a complete ADL entry using the extended ADR structure.

Store ADL entries alongside standard ADRs in the existing architecture decision repository.

Deliverable: ADL entries for all high-risk agent deployments

Success Metric: Each high-risk agent has a named human owner, documented autonomy boundary, and escalation trigger on record

Phase 3: Embed ADL into Agent Deployment Governance (Weeks 6–8)

Require an ADL entry as a condition of any new agent capability deployment.

Integrate ADL review into the existing architecture governance cycle.

At each quarterly review, compare documented autonomy boundaries against observed agent behavior.

Where behavior has drifted outside the documented boundary, treat the gap as an architecture finding.
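
Comparing a documented boundary against observed behavior can be as simple as filtering the agent's decision history. The sketch below assumes the boundary is a single monetary limit and that each logged decision records whether it was escalated; `ObservedDecision`, `drift_findings`, the decision IDs, and the limit value are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ObservedDecision:
    decision_id: str
    amount: float
    escalated: bool  # True if the agent handed the decision to a human


def drift_findings(documented_limit: float,
                   observed: list[ObservedDecision]) -> list[ObservedDecision]:
    """Decisions the agent made on its own that fall outside the documented boundary."""
    return [d for d in observed if d.amount > documented_limit and not d.escalated]


# Hypothetical decision history for one review period.
history = [
    ObservedDecision("po-1001", 4_200.00, escalated=False),   # inside boundary
    ObservedDecision("po-1002", 18_500.00, escalated=True),   # outside, correctly escalated
    ObservedDecision("po-1003", 12_750.00, escalated=False),  # outside, not escalated: drift
]

for finding in drift_findings(10_000.00, history):
    print(f"architecture finding: {finding.decision_id} approved at {finding.amount:.2f}")
```

Without a documented limit there is nothing to pass as the first argument, which is the practical meaning of having no baseline to measure drift against.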

Deliverable: ADL requirement embedded in agent deployment process

Success Metric: No new agent capability deployed without a corresponding ADL entry
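
The success metric above can be enforced as a gate in whatever deployment process already exists. A minimal sketch, assuming the ADR repository can be summarized as a mapping from agent ID to ADL ID; `MissingADLEntry`, `require_adl_entry`, and the agent name `sentiment-router-v1` are hypothetical.

```python
class MissingADLEntry(Exception):
    """Raised when an agent has no recorded authorization."""


def require_adl_entry(agent_id: str, adl_index: dict[str, str]) -> str:
    """Return the ADL ID authorizing this agent, or block the deployment."""
    adl_id = adl_index.get(agent_id)
    if adl_id is None:
        raise MissingADLEntry(f"No ADL entry for {agent_id!r}; deployment blocked")
    return adl_id


# Index built from the existing architecture decision repository (illustrative).
adl_index = {"procurement-agent-v2": "ADL-031"}

print(require_adl_entry("procurement-agent-v2", adl_index))

try:
    require_adl_entry("sentiment-router-v1", adl_index)
except MissingADLEntry as err:
    print(err)
```

The check is deliberately narrow. It does not judge whether the entry is good, only that a recorded authorization exists before the agent acts.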

Evidence from Practice

Organizations that run an agent inventory for the first time encounter the same finding.

The number of active agent deployments is larger than architecture governance was aware of.

Not because teams acted recklessly.

But because the deployment threshold was low and the governance path for agents was undefined.

Every team that deployed an agent believed governance was someone else's responsibility.

The second finding is consistent.

Almost no deployments have documented autonomy boundaries.

Agents were given capabilities.

Not constraints.

The boundary that the organization assumed existed was never written down.

The third finding is the consequential one.

When accountability is tested — by an incident, an audit, or a regulatory review — the organization discovers that the agent governance structure it believed it had does not exist in any form that can be shown to anyone.

The ADL does not prevent agent errors.

It prevents the governance failure that follows them.

Action Plan

This Week

Ask three questions:

- Can you list every AI agent currently making decisions in your organization without human review of each output?

- For each of those agents, is there a documented record of who authorized it to act and what it cannot do autonomously?

- If one of those agents produces a consequential error today, can you answer within two minutes: who authorized this, and who owns the outcome?

If these answers are unclear, agent governance may be operating from assumption rather than record.

Next 30 Days

Select the three agents with the highest consequence if wrong.

Create an ADL entry for each.

Name a human owner for each agent's outputs.

Document the autonomy boundary and escalation trigger.

Store the entries in your existing ADR repository.

That repository now contains both architectural decisions and agent accountability records.

3–6 Months

Extend ADL coverage to all agent deployments.

Introduce ADL creation as a required step in any agent capability deployment.

Review ADL entries quarterly against observed agent behavior.

Where behavior has exceeded documented boundaries, treat it as an architecture drift finding — the same category you would apply to any other system that has diverged from its design intent.
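
A review cadence only helps if overdue entries are visible. The sketch below assumes "quarterly" means 90 days and that each entry's last review date is tracked; `reviews_due`, the dates, and the entry ID `ADL-032` are hypothetical.

```python
from datetime import date, timedelta


def reviews_due(last_reviewed: dict[str, date], today: date,
                cadence_days: int = 90) -> list[str]:
    """ADL IDs whose last review is at least one cadence period old."""
    cutoff = today - timedelta(days=cadence_days)
    return sorted(adl_id for adl_id, reviewed in last_reviewed.items()
                  if reviewed <= cutoff)


# Illustrative review history.
last_reviewed = {
    "ADL-031": date(2025, 1, 15),
    "ADL-032": date(2025, 6, 1),
}

print(reviews_due(last_reviewed, today=date(2025, 7, 1)))
```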

Agent governance becomes reliable when authorization is recorded before it is needed.

Final Thought

The captain engaged the autopilot.

No logbook entry.

No documented handoff.

No record of what the autopilot was permitted to do.

The autopilot kept steering.

The organization kept deploying agents.

Both assumed the constraint was understood.

Neither wrote it down.

The ADR model already exists in your organization.

The structure already knows how to record a decision, name an owner, and document a constraint.

The only question is whether you point it at the decisions your agents are making on your behalf.

Establish Agent Accountability Before an Incident Requires It

If AI agents are operating in your organization without documented authorization records…

if autonomy boundaries exist as assumptions rather than architecture…

or if an agent incident would leave your governance team unable to answer who authorized this —

your architecture may be governing systems, but not the agents acting inside them.

In a focused 30-minute Agentic Governance Diagnostic, we will:

- Inventory your current agent deployments against documented accountability records

- Identify which agents are operating outside any recorded authorization boundary

- Introduce a practical ADL structure compatible with your existing ADR practice

- Define a 30-day plan to bring your highest-risk agent deployments into documented governance

No new governance infrastructure.

No compliance theater.

No frameworks that exist only in slide decks.

Just a governance record that answers the question before an incident asks it.

→ Book an Architecture Strategy Session

The agent is acting on your behalf.

The logbook entry is missing.

The ADL puts it back.